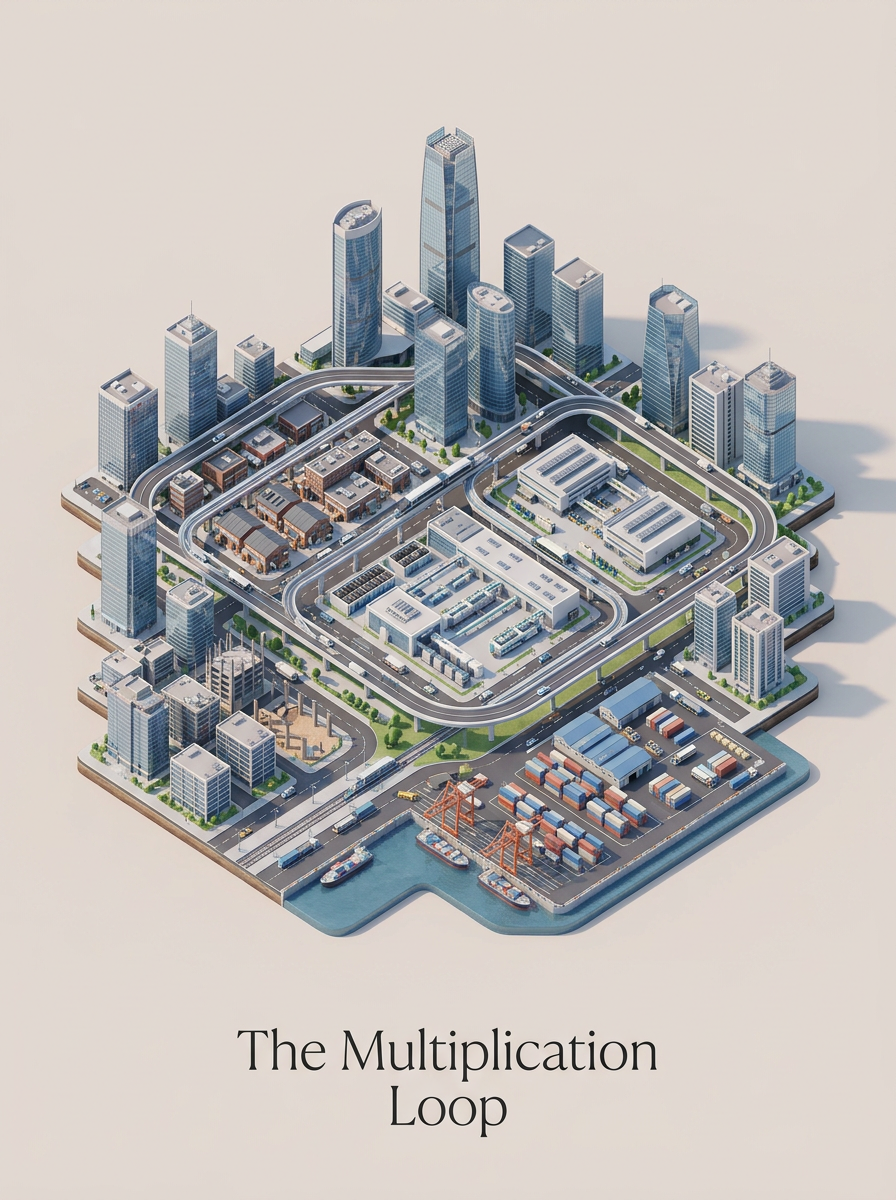

The Multiplication Loop

From Idea to Product With Less Waiting

The easiest way to overstate AI productivity is to talk about one task in isolation. Drafting is faster. Coding is faster. Summarizing is faster. Fine. But the bigger change shows up when the whole chain gets shorter.

I noticed this most clearly on small projects where I controlled the loop end to end. MemoriA, the insurance triage benchmark, hf-papers-trends, the Crunchbase profile generator: different projects, same pattern. The win was not that any one step became miraculous. The win was that fewer steps had to wait for the previous step to become formal.

The idea could become a sketch. The sketch could become a repo change. The repo change could become a local test. The local test could become a deployment. The deployment could produce real feedback. Then the next loop could start while the context was still warm.

That is where the multiplication happens.

The Spark

Most useful projects start badly. Not stupidly, just incompletely.

The insurance benchmark started as irritation: why are so many agent evaluations built around tasks that do not resemble the operational reasoning I see in insurance? MemoriA started as a product feeling: memory should make an AI companion feel more continuous without turning it into a surveillance diary. hf-papers-trends started as curiosity about whether paper trends could be classified and forecasted without becoming fake analytics.

None of those starting points was a spec.

The first job of the loop is to protect the spark without worshipping it. A spark deserves exploration, not loyalty. I write the rough thought down, ask the tool to challenge it, generate edge cases, and look for the smaller question underneath. Sometimes the idea dies there. That is a good outcome.
