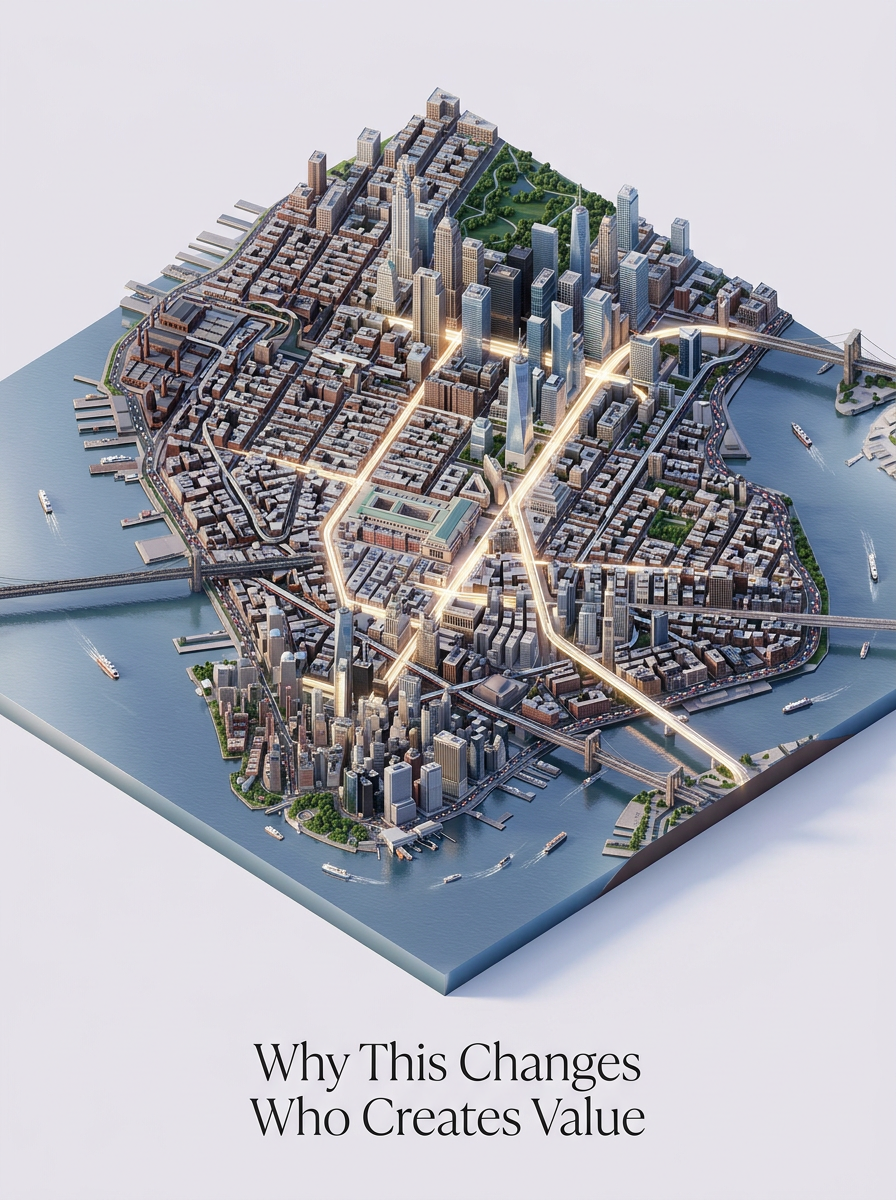

Why This Changes Who Creates Value

The Bottleneck Is Moving

The most important thing a new tool changes is often not the task itself. It changes whose judgment becomes actionable.

I have seen this in small projects more clearly than in big announcements. When the cost of a first prototype is high, the person with the idea has to spend most of their energy persuading the system to let the idea be tested. When the cost drops, the same person can bring back an artifact: a screen, a workflow, a script, a small benchmark, a rough demo. The conversation changes because the object changes.

Older organizational setups often made execution feel like railway scheduling. You could have the right idea and still wait for the right track, the right train, the right platform, the right person to translate your intent into the next format. By the time the work moved, the original signal had usually been diluted.

AI does not remove that whole structure. It does make the first stretch shorter. And that is enough to shift where value gets created.

Cheap Output Is Not the Same as Good Output

There is a lazy version of this argument that says, "AI makes everyone productive, therefore everyone creates more value." I do not believe that.

The evidence is already mixed. In some environments, like customer support, generative AI assistance has produced meaningful average gains, especially for less experienced workers. In other environments, especially mature software work with experienced developers, the tool can slow people down if it adds review burden, context switching, or misplaced confidence.

That matches my own experience. A coding agent can save a day. It can also cost an afternoon by producing a confident diff that almost fits. The productivity gain is not automatic. It depends on whether the work is framed well, whether the output can be checked, and whether the human knows where to distrust it.

So the shift is narrower than the hype, but still large: when early artifacts become cheaper to produce and easier to inspect, knowing what is worth testing becomes more valuable. The person who knows what should be tested now has more leverage than before.

Framing Becomes a Form of Production

I used to think of framing as the thing before the work. Now I think of it as part of the work.

Take the insurance triage benchmark I have been building. The hard part was not only generating 220 records. The hard part was deciding what the benchmark should prove, which lines of business mattered, what the schema should force, and which failure modes would expose weak agent reasoning. Once those decisions were made, agents could help produce, validate, and audit records. Before those decisions, more generation would have just created more cleanup.

This is the pattern I keep running into. If you frame the problem badly, AI helps you move quickly in the wrong direction. If you frame it well, the same tools become useful because they have a shape to work inside.

That makes problem framing less like a soft prelude and more like load-bearing structure.

Translation Loss Used to Hide the Real Work

In the older workflow, an idea often passed through too many languages. A business person described a need. A product person turned it into requirements. A designer turned requirements into screens. An engineer turned screens into implementation. A reviewer found what had been lost along the way.

Some of that process was necessary. Some of it was just meaning getting worn down each time it changed hands.

The loss was not only semantic. It was emotional and contextual. The tiny irritation that made the problem worth solving disappeared. The thing a user would never say in a requirements document but always feel in the workflow disappeared. The operational constraint everybody in the room knew but nobody wrote down disappeared.

When high-context people can make rough artifacts themselves, less of that context has to survive the full handoff chain before it becomes visible. The artifact can carry the nuance earlier. It can also reveal where the author's understanding was naive.

Both outcomes are useful.

The First Move Now Teaches More

The first move in a project used to be expensive enough that organizations tried to make it perfect. That encouraged a strange habit: people over-explained before they had enough evidence.

Cheap prototypes reverse the order. You can build a small wrong thing, learn why it is wrong, and then decide whether the idea deserves more seriousness. This is not an excuse for sloppy engineering. It is a better way to avoid fake certainty.

In MemoriA, the first memory prototype taught me more by failing than the concept note had taught me by sounding coherent. The product question shifted from "Can we store memories?" to "Which memories should this product be allowed to retrieve, and under what kind of user control?" That is a better question. It came from the artifact.

This is where value starts to move. People who can turn fuzzy context into a testable first move begin shaping the agenda earlier.

More People Can Convert Judgment Into Action

This is not only a software story. A strategist can test a market narrative with a generated landing page and a small research workflow. A consultant can build a data-cleaning assistant for one messy client process before proposing a transformation program. An operations lead can mock a dashboard that exposes the real bottleneck instead of waiting for a reporting roadmap.

The common thread is not that these people become full-stack engineers overnight. They become better initiators. They can bring judgment closer to action.

That matters because initiation shapes learning. The person who starts the experiment decides what the organization gets to see first. If only technical queues can initiate, the learning surface is narrower. If more context-rich people can initiate, the organization sees more problems in artifact form.

Some of those artifacts will be bad. Good. Bad artifacts are often better than invisible assumptions.

Specialists Still Matter

There is a boundary that this book needs to keep clear. Faster initiation does not replace specialist depth.

Architecture still matters. Security still matters. Reliability, integration, cost control, observability, accessibility, maintainability: all of it still matters. A quick prototype can show value, but it cannot excuse a weak production system. In fact, the prototype may create new obligations by convincing people too early.

The useful change is in timing. Specialists no longer have to be the only people allowed to touch the first version. They can enter when there is something concrete to critique, harden, simplify, or reject.

That can make their work better. I would much rather ask a serious engineer to review a working prototype than ask them to interpret ten pages of aspirational requirements.

How Organizations Will Misread This

Some organizations will treat this shift as a headcount reduction story. I think that is the least interesting and often the most damaging interpretation.

The better reading is that the organization can run more real experiments with the same amount of human attention, provided it also improves review. The machine can produce more candidates. Humans still need to decide which candidates deserve trust.

If leadership only asks, "How much faster can we produce?" the system will drown in plausible work. If it asks, "How do we turn more context into better-tested decisions?" the tools start to pay off.

The difference sounds subtle. It is not. One path creates output volume. The other creates learning.

Where Value Moves

When implementation gets cheaper at the front of a project, value moves toward people who can choose the right first implementation.

That includes engineers with taste. It includes product people with operational context. It includes founders who can stay close to the artifact. It includes domain experts who know where generic solutions usually fail. It includes curious operators who are willing to learn enough of the toolchain to make their judgment visible.

The center of gravity is not "everyone codes now." The center of gravity is "more people can make claims testable."

That is a quieter claim than the usual AI rhetoric. It is also the one I trust.